Yesterday, the Ahmedabad User Group organized the Global Azure Bootcamp 2016 event. It was fun to be there. The event was very well received and attended by a group of people excited about the Azure cloud platform. Thanks to the Ahmedabad User Group for hosting it.

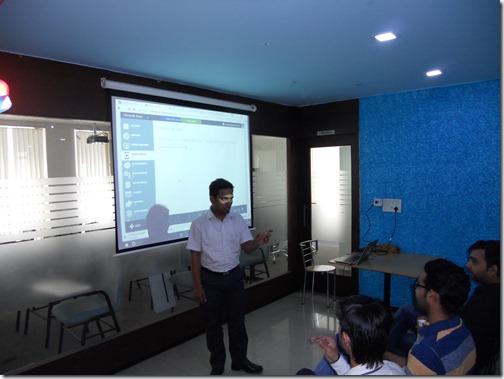

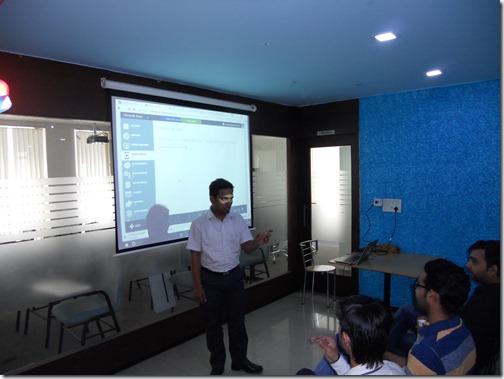

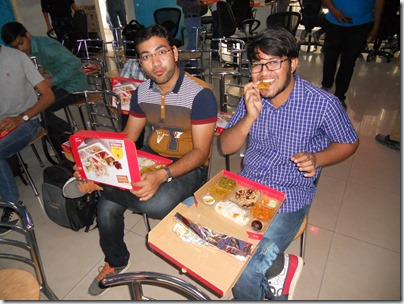

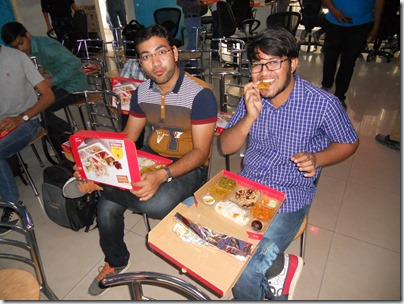

Event Recap:

The event started around 10:00 am. Mahesh made all the attendees feel comfortable and gave some insights into the Azure platform.

After that, it was my turn to present. I shared my knowledge of DocumentDB with a presentation titled Introduction to DocumentDB.

There was a lot of interest from the audience, and we had plenty of conversation about it. Here is the link to the presentation.

Here is the agenda for my presentation:

- What are document databases?

- What is DocumentDB?

- Why should we use DocumentDB?

- How can we use DocumentDB?

https://github.com/dotnetjalps/gab16augdemo

Due to the huge interest, we promised to hold some advanced-level sessions on DocumentDB in the coming months.

After that, Jagdish took the stage and presented on Azure Mobile Services. He showed how we can leverage Azure Mobile Services with a Windows Phone application, and shared some other cool insights into Azure Mobile Services.

Then we had lunch, with some great interaction and conversation about cloud technologies.

After lunch, it was time for Sanket Shah to rock the stage. He presented on Architecting Modern Solutions on Azure. He had some cool demos, and the audience was amazed by them.

After that, we had another back-to-back session from Kaushal Bhavsar. He presented how we can build secure two-factor authentication with Azure technologies, and gave some cool demos using Azure Active Directory and Office 365 account authentication.

After that, we had tea, and then it was time for Ahmedabad User Group president Mahesh Dhola to rock the stage. Instead of giving a demo, he chose to use the whiteboard and gave a presentation on DevOps and Microsoft Azure. It was fun watching him present a topic we all love.

After the presentation, it was the moment everybody had been waiting for: prizes. We had a lucky draw, and almost everybody won a prize.

And this guy was an inspiration for all of us.

Then we took some photos of all the speakers. We missed Jagdish here, as he had to go home for some personal work.

Overall, it was a fun event. We had so much fun hacking and learning something new. Thank you, Microsoft and the Ahmedabad User Group, for hosting such a nice event.

Keep rocking!